The Future of AI Voice Models: Trends and Innovations

The rapid advancements in AI voice models have brought us closer to a world where digital interactions feel almost human. Among the leading innovations in this space are Sesame AI’s voice models, which are renowned for their strikingly human-like qualities.

Understanding Sesame AI’s Innovative Voice Models

The Rise of Human-Sounding AI Voices

Sesame AI’s Conversational Speech Model (CSM) has set a new benchmark in AI voice technology. Unlike traditional text-to-speech systems, CSM processes text and audio context together in a single, end-to-end model. This multimodal approach allows the AI to produce speech that is not only clear but also carries subtle vocal behaviors that convey meaning and emotion. For instance, CSM can insert natural pauses, imitate breathing, or change tone in real time, making the interaction feel more lifelike.

Technical Deep Dive: How Sesame’s CSM Works

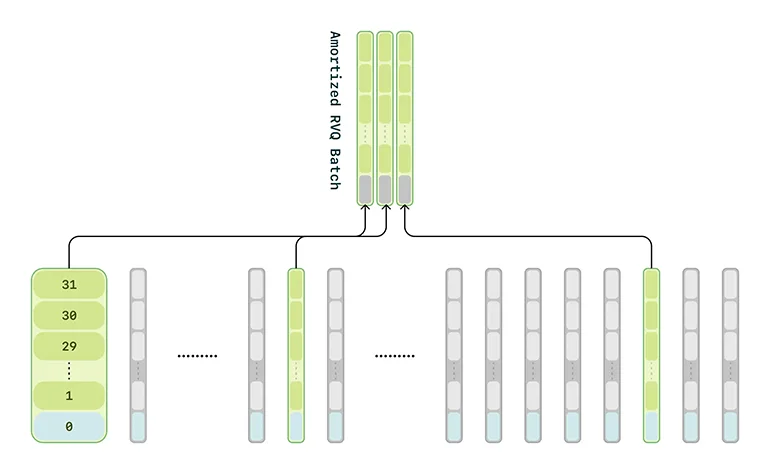

At the heart of Sesame AI’s innovation is its sophisticated architecture, which employs transformer-based models. The CSM comprises two neural networks: a "backbone" model that handles text and conversational context, and a decoder that produces fine-grained audio output. This setup allows the model to generate speech more naturally, avoiding the bottlenecks of traditional two-stage TTS systems.
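To make the backbone/decoder split concrete, here is a minimal toy sketch of the data flow. It is not Sesame's code or API: the class names, the "one coarse code plus a few fine codes per frame" layout, and the arithmetic standing in for neural networks are all assumptions made purely for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Turn:
    """One earlier turn of the conversation (hypothetical structure)."""
    text: str
    audio_tokens: List[int]  # placeholder audio codes for that turn


class ToyBackbone:
    """Stand-in for the backbone: reads text plus conversational context."""

    def __init__(self, vocab_size: int = 1024):
        self.vocab_size = vocab_size

    def next_coarse_code(self, history: List[Turn], text: str, frame: int) -> int:
        # Toy arithmetic instead of a transformer: mix context and text
        # into a single integer code for this audio frame.
        context_sum = sum(sum(t.audio_tokens) + len(t.text) for t in history)
        text_sum = sum(ord(c) for c in text)
        return (context_sum + text_sum + 31 * frame) % self.vocab_size


class ToyDecoder:
    """Stand-in for the decoder: expands a coarse code into finer codes."""

    def __init__(self, n_fine: int = 3, vocab_size: int = 1024):
        self.n_fine = n_fine
        self.vocab_size = vocab_size

    def fine_codes(self, coarse: int) -> List[int]:
        # Assumed layout: each frame gets n_fine additional detail codes.
        return [(coarse * (k + 2) + k) % self.vocab_size for k in range(self.n_fine)]


def generate(history: List[Turn], text: str, n_frames: int,
             backbone: ToyBackbone, decoder: ToyDecoder) -> List[List[int]]:
    """Run backbone then decoder for each frame, in a single pass."""
    frames = []
    for i in range(n_frames):
        coarse = backbone.next_coarse_code(history, text, i)
        frames.append([coarse] + decoder.fine_codes(coarse))
    return frames


if __name__ == "__main__":
    history = [Turn("hi", [1, 2, 3])]
    for frame in generate(history, "hello", 4, ToyBackbone(), ToyDecoder()):
        print(frame)
```

The point of the sketch is only that the conversation history feeds directly into every generated frame, so changing the context changes the audio codes even when the text stays the same.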

Training the model involves a massive corpus of voice data, totaling roughly 1 million hours of predominantly English audio recordings. This extensive dataset helps the model learn both the words and the nuances of how those words are spoken in various situations.
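For a sense of scale, the quoted figure can be converted into other units. The short calculation below is only back-of-the-envelope arithmetic; the 12.5 frames-per-second rate is an assumed value chosen for illustration and does not come from this article.

```python
HOURS = 1_000_000               # corpus size quoted in the text
SECONDS_PER_HOUR = 3600
ASSUMED_FRAME_RATE_HZ = 12.5    # assumption for illustration only


def corpus_seconds(hours: int) -> int:
    """Total audio duration in seconds."""
    return hours * SECONDS_PER_HOUR


def corpus_frames(hours: int, frame_rate_hz: float) -> int:
    """Number of audio frames at a given (assumed) frame rate."""
    return int(corpus_seconds(hours) * frame_rate_hz)


def corpus_years(hours: int) -> float:
    """Continuous listening time in 365-day years."""
    return hours / (24 * 365)


if __name__ == "__main__":
    print(f"seconds: {corpus_seconds(HOURS):,}")
    print(f"frames at {ASSUMED_FRAME_RATE_HZ} Hz: {corpus_frames(HOURS, ASSUMED_FRAME_RATE_HZ):,}")
    print(f"years of continuous audio: {corpus_years(HOURS):.1f}")
```

In other words, one million hours is about 3.6 billion seconds, or a little over 114 years of uninterrupted audio.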

| Feature | Description |
|---|---|
| Model Architecture | Transformer-based, comprising a backbone and decoder. |
| Training Data | 1 million hours of predominantly English audio. |
| Token Types | Semantic and acoustic tokens. |
| Voice Realism | Capable of emulating human-like vocal behaviors. |
| Availability | Open-sourced, accessible on GitHub under SesameAILabs. |

The Ethical Considerations of Advanced Voice AI

Alongside the technological advancements, Sesame AI has emphasized ethical considerations. The open-source release of the CSM comes with explicit guidelines prohibiting uses like impersonation, misinformation, and illegal activities. This underscores the importance of responsible innovation in a rapidly evolving field.

Did you know? Sesame AI acknowledges and addresses the issue of voice cloning: models that produce realistic human speech can easily be used to clone a person’s voice without their consent or knowledge. Always obtain consent and keep ethical guidelines in mind when working with AI voice models.

Pro tip: Always check the ethical guidelines and intended use cases before working with an open-source AI model.

The Road to “Voice Presence”

Sesame AI’s overarching mission is to achieve what it calls "voice presence": the quality that makes spoken interactions feel real, understood, and valued. This involves more than just clear pronunciation; it requires the AI to exhibit multiple human-like qualities in tandem.

Emotional Intelligence in AI Voices

One of the key elements of "voice presence" is emotional intelligence, which enables the AI to detect and respond to human emotions. Sesame’s CSM does not always read emotions correctly, but it excels at detecting nuances such as sarcasm, which sets it apart from older voice AI models.

Conversational Dynamics and Contextual Awareness

Another crucial aspect is the AI’s ability to manage conversational dynamics, understanding the timing and flow of dialogue. This includes knowing when to pause, when to interject, and using natural filler words or laughter. The AI can also adjust its speaking style based on the context and history of the conversation, maintaining a coherent persona over time.

Reader question: How do you think advanced AI voice models will change the way we interact with digital assistants in the future?

Potential Future Trends in AI Voice Models

Continued Improvement in Realism

As we move forward, we can expect even greater realism in AI-generated voices. This will likely involve more advanced models capable of capturing even finer nuances of human speech. As realism improves, the stakes for distinguishing real speech from AI-generated speech will rise, and many companies will likely start integrating detection tools.

Integration with Wearable Technology

The trend of integrating human-sounding AI voices with wearable technology, such