NVIDIA’s Blackwell GPUs are demonstrating superior AI inference capabilities, which could translate into higher profit margins for the companies that deploy them.

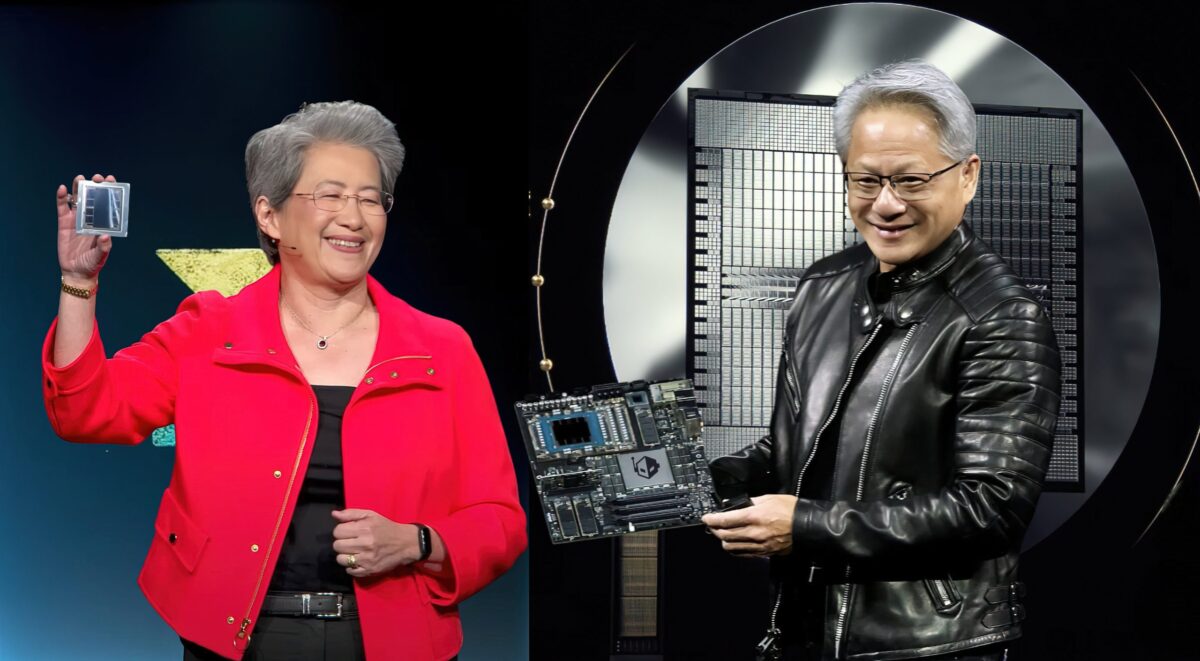

NVIDIA Software and Blackwell Architecture Lead in AI Inference, AMD Striving to Catch Up

A recent Morgan Stanley Research analysis comparing the operational costs and margins of AI inference solutions finds that many multi-chip AI inference “factories” achieve margins exceeding 50%. NVIDIA is the dominant player in this high-margin sector.

NVIDIA’s Blackwell GB200 NVL72 platform shows the highest profit margin at 77.6%, representing a profit of around 3.5 billion euros.

The evaluation analyzed a sample of 100 MW AI factories incorporating server racks from various suppliers, including NVIDIA, Google, AMD, AWS, and Huawei. The NVIDIA Blackwell GB200 NVL72 platform stood out with the highest profit margin at 77.6%, equating to approximately 3.5 billion euros in profit.

Google’s TPU v6e secured second place with a 74.9% margin, followed by AWS with its Trn2 UltraServer at 62.5%. Other solutions ranged between 40% and 50%, while AMD’s results suggest significant room for improvement.

AMD’s MI355X platform posted a negative profit margin of 28.2% in AI inference, while the older MI300X came in at -64%. Measured by revenue per chip, NVIDIA’s GB200 generates €7.5 per hour, compared with only €1.7 for AMD.
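To make the margin figures concrete, here is a minimal Python sketch that converts each quoted margin into the cost incurred per euro of revenue. It assumes the conventional definition, margin = (revenue - cost) / revenue, which the article does not spell out. The function and variable names are illustrative only, and the inputs are the figures quoted above.

```python
# Profit margins as quoted in the article (attributed to Morgan Stanley Research).
MARGINS = {
    "NVIDIA GB200 NVL72": 0.776,
    "Google TPU v6e": 0.749,
    "AWS Trn2": 0.625,
    "AMD MI355X": -0.282,
    "AMD MI300X": -0.64,
}


def cost_per_revenue_unit(margin: float) -> float:
    """Cost per unit of revenue, ASSUMING margin = (revenue - cost) / revenue."""
    return 1.0 - margin


for platform, margin in MARGINS.items():
    cost = cost_per_revenue_unit(margin)
    print(f"{platform}: {cost:.3f} spent per 1.00 earned")

# Hourly revenue per chip as quoted in the article, in euros.
GB200_EUR_PER_HOUR = 7.5
AMD_EUR_PER_HOUR = 1.7

revenue_ratio = GB200_EUR_PER_HOUR / AMD_EUR_PER_HOUR
print(f"GB200 earns about {revenue_ratio:.1f}x AMD's hourly revenue per chip")
```

Under that assumption, a negative margin simply means costs exceed revenue: at -28.2%, roughly €1.28 is spent for every €1.00 earned, whereas at 77.6% the figure is about €0.22.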

Other chips earn between €0.5 and €2.0 per hour, which puts NVIDIA in a class of its own. NVIDIA’s advantage is attributed to its FP4 support and ongoing optimization of its CUDA AI software stack.

While AMD offers capable hardware with its MI300 and MI350 platforms, there’s room for improvement, notably in the competitive AI inference space.

According to Morgan Stanley, the total cost of ownership (TCO) for MI300X platforms can reach 744 million euros, comparable to the NVIDIA GB200 platform, estimated at around 800 million euros. With costs this close to NVIDIA’s but far lower revenue per chip, AMD is at a disadvantage.

The MI355X servers have an estimated TCO of 588 million euros, similar to Huawei’s CloudMatrix 384. The cost factor may contribute to the popularity of NVIDIA, which is projected to capture 85% of the AI inference market in the coming years.

NVIDIA and AMD are both committed to an annual release cadence to stay competitive. NVIDIA will launch its Blackwell Ultra GPU platform, promising a 50% performance increase over the GB200, followed by Rubin next year. AMD plans to introduce the MI400 to compete with Rubin and is optimizing it for AI inference, setting the stage for an exciting year in this sector.