Yesterday, AMD and Meta signed a deal to deliver 6GW of AI infrastructure, mirroring AMD’s existing deal with OpenAI, signed last year. If AMD’s stock reaches $600 by 2031, the agreement would reward Meta with 10% of AMD in exchange for purchasing its GPUs. While this might seem drastic at first glance, a closer look at the deal reveals more than first meets the eye.

Firstly, AMD is clearly making a play for its Instinct MI400-based systems to be used in AI data centers, despite lacking the edge that Nvidia’s Grace Blackwell and upcoming Rubin architectures can provide. Indeed, Nvidia remains the preferred chip for those using AI accelerators for training workloads.

Last year, Meta’s AI labs shifted away from competing in frontier model development, instead channeling their efforts into developing what the company calls Personal Superintelligence. In early January, the company spun up its MetaCompute organization, responsible for scaling up its data center capabilities. The new organization will centralize ownership of Meta’s entire tech stack. So, while Meta is no longer publicly competing with the latest frontier AI models, it has a clear goal and wants to scale up its AI operations.

Meta’s AI spending could reach up to $135 billion in 2026, up from $72 billion in 2025. Just last week, the company also announced that it would use Nvidia’s NVL72 rack-scale systems, deploying Arm-powered Grace server CPUs and Spectrum-X switches with co-packaged optics. So, Meta is clearly taking a platform-agnostic approach to scaling its AI compute initiative, which is set to deploy tens of gigawatts of power.
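To put the spending figures in perspective, here is a minimal sketch of the year-over-year arithmetic. It uses only the two numbers quoted above and treats the $135 billion figure as an upper bound, so the result is a ceiling rather than a forecast.

```python
# Figures quoted in the article, in billions of US dollars.
SPEND_2025_BN = 72
SPEND_2026_MAX_BN = 135  # "up to" figure, so this is an upper bound

def yoy_growth(previous, current):
    """Fractional year-over-year growth from `previous` to `current`."""
    return (current - previous) / previous

max_growth = yoy_growth(SPEND_2025_BN, SPEND_2026_MAX_BN)
max_multiple = SPEND_2026_MAX_BN / SPEND_2025_BN

print(f"Maximum increase: {max_growth:.1%}")
print(f"Maximum multiple of 2025 spending: {max_multiple:.3f}x")
```

In other words, hitting the top of the range would mean nearly doubling 2025's spending in a single year.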

It’s most likely that Meta will use the NVL72 racks to serve frontier model development, training, and bleeding-edge heavy-duty inferencing tasks. However, AMD’s place in the puzzle becomes increasingly clear when you zoom out on the strategy at scale.

Meta CEO Mark Zuckerberg clarified that the hardware supplied by AMD will primarily be used for ‘inference’ and ‘personal superintelligence’ workloads. The inference market is becoming increasingly crowded with all manner of custom silicon from the likes of SambaNova, Qualcomm, and Broadcom. Meta is also understood to be developing its own ASIC under the Meta Training and Inference Accelerator (MTIA) program, though we’ve yet to see the fruits of its efforts.

“Meta is taking a hybrid approach, with AMD becoming a major, if not primary, partner for specific AI inference workloads, while Nvidia continues to supply high-end hardware for training,” says analyst Jon Peddie. Meta appears to be hedging its AI deployments between brands so that it does not become solely reliant upon Nvidia’s CUDA-shaped moat.

He added that AMD’s hardware will likely serve workloads for live AI traffic, such as sticker generation and image editing. So, while Meta might not currently be playing in the frontier pool, the company is still deploying AI at scale across a range of products.

Inside AMD’s approach

Meta clearly sees an opportunity to use and deploy AMD’s rack-scale Helios clusters, and AMD’s stock showed some gains following the announcement. The performance-based warrant prices shares at just one cent each, with 160 million shares of common stock up for grabs, vesting as Instinct GPU shipment milestones are reached. The initial tranche will vest following a 1-gigawatt shipment, scaling to the full six gigawatts by 2031, provided AMD’s stock also meets a $600 threshold.
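The scale of the warrant is easier to grasp with the arithmetic written out. The sketch below uses only the figures reported above (160 million shares, a one-cent exercise price, and the $600 threshold) and works in whole cents to avoid floating-point rounding. The function names are illustrative, and the calculation assumes every share vests and is exercised with AMD trading at exactly $600, which is a simplification; the actual tranche schedule is not detailed here.

```python
# Figures reported in the article.
WARRANT_SHARES = 160_000_000    # shares of AMD common stock covered
EXERCISE_PRICE_CENTS = 1        # one cent per share
THRESHOLD_PRICE_USD = 600       # share price tied to full vesting

def exercise_cost_usd(shares):
    """Total amount Meta would pay to exercise `shares` warrants."""
    return shares * EXERCISE_PRICE_CENTS / 100

def intrinsic_value_usd(shares, share_price_usd):
    """Market value of the shares minus the cost of exercising them."""
    price_cents = round(share_price_usd * 100)
    gain_per_share_cents = max(price_cents - EXERCISE_PRICE_CENTS, 0)
    return shares * gain_per_share_cents / 100

cost = exercise_cost_usd(WARRANT_SHARES)
value = intrinsic_value_usd(WARRANT_SHARES, THRESHOLD_PRICE_USD)

print(f"Cost to exercise all warrants: ${cost:,.0f}")
print(f"Value at ${THRESHOLD_PRICE_USD} per share: ${value:,.0f}")
```

Under those assumptions, Meta would pay $1.6 million to exercise warrants worth roughly $96 billion, which is why the exercise price is effectively nominal.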

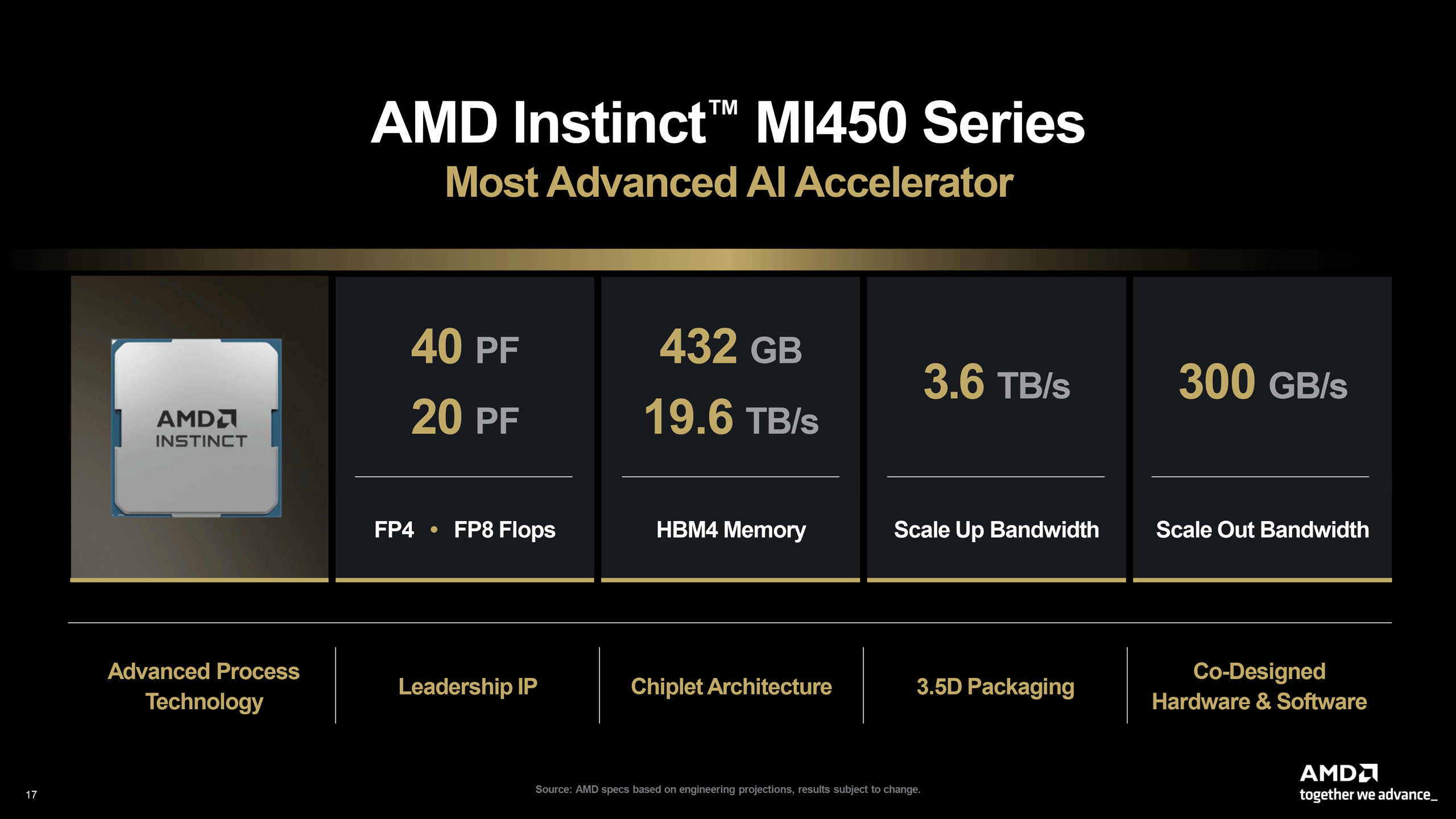

“Meta will be a lead customer for 6th Gen AMD EPYC CPUs, codenamed ‘Venice,’ and ‘Verano,’ a next-generation EPYC processor designed with workload-specific optimizations to deliver leadership performance-per-dollar-per-watt,” AMD’s statement says. Following the announcement, AMD discussed the deal in a conference call. CEO Dr. Lisa Su said that AMD plans to ship a custom MI450-based accelerator, optimized specifically for Meta’s workloads. The first deployment is expected in the second half of 2026, and the hardware is currently in a hardware and software validation phase.

“The Meta deployment is expected to generate data center AI revenue of significant double-digit billions of dollars per gigawatt. Revenue will begin in the second half of 2026 and ramp alongside our MI450 deployment with other customers,” said Jean Hu, CFO at AMD.

During a Q&A in the call, Vivek Arya at BofA Securities raised an important point: If demand is so strong for AMD’s AI accelerators, why is the company effectively giving away equity to secure orders? This isn’t the first deal of its kind; it almost exactly mirrors AMD’s deal with OpenAI from October 2025. Su argued that such deals are beneficial for AMD shareholders, as they guarantee a certain level of earnings and help AMD shape its own roadmap, since the companies co-develop hardware and will continue to do so for the duration of the deal.

“So if you look at the structure of our warrants in this case, is, again, it’s a very aligned incentive structure. Meta is making a big bet on deploying at large scale for AMD, which is great. AMD benefits from this large-scale deployment, which brings revenue scale, ecosystem maturity, and software maturity. And assuming that we satisfy all of the purchases as well as the share price thresholds, AMD shareholders will benefit significantly, and Meta gets to benefit as part of that,” Su said.

In short, in exchange for equity, AMD secures a guaranteed volume of orders and, in turn, value for its shareholders. Earlier in the call, Su noted that the deal would help AMD’s long-term financials. In particular, the company aims for 80% of its compound annual growth rate (CAGR) to come from data centers and AI. Once that goal has been achieved, the company could attribute $20 of per-share value to its data center efforts, a target AMD aims to hit before 2031.

This stands in stark contrast to Nvidia, which is not offering up equity for orders; its customers line up anyway. If AMD delivers on its commitments with both Meta and OpenAI, it will have deployed 12 gigawatts of compute and given up 20% of the company in return for locking in AI accelerator buy-in. If the company’s shares rise to $600 by 2031, that could become the new reality for one of the chip industry’s longest-standing companies.